Bhattacharyya distance

Several indices have been suggested in the statistical literature to reflect the degree of dissimilarity between any two probability distributions (cf. Probability distribution). Such indices have been variously called measures of distance between two distributions (see [a1], for instance), measures of separation (see [a2]), measures of discriminatory information [a3], [a4], and measures of variation-distance [a5]. As their names imply, these indices were not all introduced for exactly the same purpose, but they share the property of increasing as the two distributions involved "move apart". An index with this property may be called a measure of divergence of one distribution from another. A general method for generating measures of divergence has been discussed in [a6].

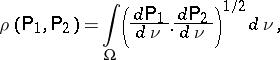

The Bhattacharyya distance is a measure of divergence. It can be defined formally as follows. Let $(\Omega, \mathcal{B}, \nu)$ be a measure space, and let $\mathcal{P}$ be the set of all probability measures (cf. Probability measure) on $\mathcal{B}$ that are absolutely continuous with respect to $\nu$. Consider two such probability measures $\mathbf{P}_1, \mathbf{P}_2 \in \mathcal{P}$ and let $p_1$ and $p_2$ be their respective density functions with respect to $\nu$.

The Bhattacharyya coefficient between $\mathbf{P}_1$ and $\mathbf{P}_2$, denoted by $\rho(\mathbf{P}_1, \mathbf{P}_2)$, is defined by

$$\rho(\mathbf{P}_1, \mathbf{P}_2) = \int_\Omega \left( \frac{d\mathbf{P}_1}{d\nu} \cdot \frac{d\mathbf{P}_2}{d\nu} \right)^{1/2} d\nu = \int_\Omega \sqrt{p_1 p_2}\, d\nu,$$

where $d\mathbf{P}_i / d\nu = p_i$ is the Radon–Nikodým derivative (cf. Radon–Nikodým theorem) of $\mathbf{P}_i$ ($i = 1, 2$) with respect to $\nu$. It is also known as the Kakutani coefficient [a9] and the Matusita coefficient [a10]. Note that $\rho(\mathbf{P}_1, \mathbf{P}_2)$ does not depend on the measure $\nu$ dominating $\mathbf{P}_1$ and $\mathbf{P}_2$.
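For distributions on a finite set the integral becomes a sum. The sketch below is a minimal illustration, assuming $\Omega$ is finite and each $\mathbf{P}_i$ is given by its list of point masses; the function name `bhattacharyya_coefficient` and the `weights` parameter are hypothetical. The weights play the role of the dominating measure $\nu$ (point masses $w_k > 0$), so the densities are $\mathbf{P}_i(\{k\}) / w_k$. Trying different weights illustrates that $\rho$ does not depend on $\nu$.

```python
import math
from typing import Optional, Sequence


def bhattacharyya_coefficient(
    mass1: Sequence[float],
    mass2: Sequence[float],
    weights: Optional[Sequence[float]] = None,
) -> float:
    """Bhattacharyya coefficient rho(P1, P2) on a finite set Omega.

    mass1[k] and mass2[k] are the probabilities P1({k}) and P2({k}).
    weights[k] > 0 is the mass that a dominating measure nu gives to the
    point k; if omitted, nu is the counting measure.
    """
    if len(mass1) != len(mass2):
        raise ValueError("both distributions must live on the same set")
    if weights is None:
        weights = [1.0] * len(mass1)
    if len(weights) != len(mass1) or any(w <= 0 for w in weights):
        raise ValueError("weights must be positive, one per point")
    total = 0.0
    for a, b, w in zip(mass1, mass2, weights):
        p1, p2 = a / w, b / w  # densities dP_i/dnu at the point k
        total += math.sqrt(p1 * p2) * w  # integrate sqrt(p1 * p2) against nu
    return total


p = [0.2, 0.5, 0.3]
q = [0.1, 0.4, 0.5]
print(bhattacharyya_coefficient(p, q))                           # counting measure
print(bhattacharyya_coefficient(p, q, weights=[0.5, 2.0, 7.0]))  # another nu
```

Both calls print approximately 0.976. Changing $\nu$ rescales the densities, but $\sqrt{(a/w)(b/w)}\,w = \sqrt{ab}$, so the value of $\rho$ is unchanged.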

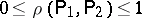

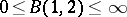

It is easy to verify that

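i) $0 \le \rho(\mathbf{P}_1, \mathbf{P}_2) \le 1$;

ii) $\rho(\mathbf{P}_1, \mathbf{P}_2) = 1$ if and only if $\mathbf{P}_1 = \mathbf{P}_2$;

iii) $\rho(\mathbf{P}_1, \mathbf{P}_2) = 0$ if and only if $\mathbf{P}_1$ is orthogonal to $\mathbf{P}_2$.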
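Properties i)–iii) follow from the Cauchy–Schwarz inequality in $L^2(\nu)$ applied to $\sqrt{p_1}$ and $\sqrt{p_2}$. A brief derivation:

$$0 \le \rho(\mathbf{P}_1, \mathbf{P}_2) = \int_\Omega \sqrt{p_1}\,\sqrt{p_2}\, d\nu \le \left( \int_\Omega p_1\, d\nu \right)^{1/2} \left( \int_\Omega p_2\, d\nu \right)^{1/2} = 1.$$

Equality on the right holds if and only if $\sqrt{p_1} = c\sqrt{p_2}$ $\nu$-almost everywhere for some constant $c \ge 0$. Integrating $p_1 = c^2 p_2$ gives $c = 1$, so $p_1 = p_2$ $\nu$-a.e., that is, $\mathbf{P}_1 = \mathbf{P}_2$. On the left, $\rho(\mathbf{P}_1, \mathbf{P}_2) = 0$ if and only if $p_1 p_2 = 0$ $\nu$-a.e. In that case $A = \{p_1 > 0\}$ satisfies $\mathbf{P}_1(A) = 1$ and $\mathbf{P}_2(A) = 0$, which is orthogonality. Conversely, if $\mathbf{P}_1(A) = 1$ and $\mathbf{P}_2(A) = 0$, then $p_2 = 0$ a.e. on $A$ and $p_1 = 0$ a.e. off $A$, so $p_1 p_2 = 0$ $\nu$-a.e.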

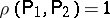

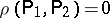

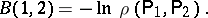

The Bhattacharyya distance between two probability distributions $\mathbf{P}_1$ and $\mathbf{P}_2$, denoted by $B(1,2)$, is defined by

$$B(1,2) = -\ln \rho(\mathbf{P}_1, \mathbf{P}_2).$$

Clearly,  . The distance

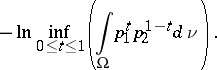

. The distance  does not satisfy the triangle inequality (see [a7]). The Bhattacharyya distance comes out as a special case of the Chernoff distance (taking

does not satisfy the triangle inequality (see [a7]). The Bhattacharyya distance comes out as a special case of the Chernoff distance (taking  ):

):

|
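The following minimal sketch assumes distributions on a finite set, with $\nu$ the counting measure. All function names are hypothetical. It computes $B(1,2)$ and exhibits three distributions for which the triangle inequality fails. It also approximates the Chernoff distance $C(1,2) = -\ln \inf_{0<t<1} \int_\Omega p_1^t p_2^{1-t}\, d\nu$ by a ternary search over $t$. The search is justified because $t \mapsto \ln \int_\Omega p_1^t p_2^{1-t}\, d\nu$ is convex (here it is a log-sum-exp of functions affine in $t$).

```python
import math
from typing import Sequence


def bhattacharyya_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """B(1,2) = -ln rho(P1, P2) for point masses p, q on a finite set."""
    rho = sum(math.sqrt(a * b) for a, b in zip(p, q))
    # rho == 0 exactly when P1 and P2 are orthogonal; then B = +infinity.
    return math.inf if rho == 0.0 else -math.log(rho)


def chernoff_integral(p: Sequence[float], q: Sequence[float], t: float) -> float:
    """Sum of p^t q^(1-t) for 0 < t < 1 (terms with a zero mass vanish)."""
    return sum(a ** t * b ** (1.0 - t) for a, b in zip(p, q) if a > 0 and b > 0)


def chernoff_distance(p: Sequence[float], q: Sequence[float], iterations: int = 200) -> float:
    """C(1,2) = -ln inf_{0<t<1} chernoff_integral(p, q, t), via ternary search.

    t -> ln chernoff_integral(p, q, t) is convex, so the integral itself is
    convex in t and ternary search locates its infimum on (0, 1).
    """
    lo, hi = 0.0, 1.0
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if chernoff_integral(p, q, m1) < chernoff_integral(p, q, m2):
            hi = m2  # the minimum lies in [lo, m2]
        else:
            lo = m1  # the minimum lies in [m1, hi]
    g = chernoff_integral(p, q, 0.5 * (lo + hi))
    return math.inf if g == 0.0 else -math.log(g)


P1, P2, P3 = [0.9, 0.1], [0.5, 0.5], [0.1, 0.9]
b12 = bhattacharyya_distance(P1, P2)
b23 = bhattacharyya_distance(P2, P3)
b13 = bhattacharyya_distance(P1, P3)
print(b12 + b23, b13)                  # about 0.223 < 0.511: triangle inequality fails
print(b12, chernoff_distance(P1, P2))  # B(1,2) <= C(1,2)
```

For the symmetric pair $\mathbf{P}_1, \mathbf{P}_3$, the infimum is attained at $t = 1/2$, so there the Chernoff and Bhattacharyya distances coincide.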

The Hellinger distance [a8] between two probability measures $\mathbf{P}_1$ and $\mathbf{P}_2$, denoted by $H(1,2)$, is related to the Bhattacharyya coefficient by the following relation:

$$H(1,2) = \int_\Omega \left( \sqrt{p_1} - \sqrt{p_2} \right)^2 d\nu = 2\left[ 1 - \rho(\mathbf{P}_1, \mathbf{P}_2) \right].$$

$B(1,2)$ is called the Bhattacharyya distance since it is defined through the Bhattacharyya coefficient. It should be noted that the distance defined in a statistical context by A. Bhattacharyya [a11] is different from $B(1,2)$.
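The relation between the Hellinger distance and $\rho$ can be checked numerically. This minimal sketch assumes distributions on a finite set ($\nu$ = counting measure) and takes $H(1,2) = \int_\Omega (\sqrt{p_1} - \sqrt{p_2})^2\, d\nu$ as the definition. Other normalizations of the Hellinger distance, such as a factor $1/2$ or a square root, are also in use. The function names are hypothetical.

```python
import math
from typing import Sequence


def bhattacharyya_coefficient(p: Sequence[float], q: Sequence[float]) -> float:
    """rho(P1, P2) for point masses on a finite set (nu = counting measure)."""
    return sum(math.sqrt(a * b) for a, b in zip(p, q))


def hellinger(p: Sequence[float], q: Sequence[float]) -> float:
    """H(1,2) = sum over the points of (sqrt(p) - sqrt(q))^2."""
    return sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q))


p = [0.2, 0.5, 0.3]
q = [0.1, 0.4, 0.5]
h = hellinger(p, q)
rho = bhattacharyya_coefficient(p, q)
print(h, 2.0 * (1.0 - rho))                      # equal up to rounding, about 0.0481
print(-math.log(1.0 - h / 2.0), -math.log(rho))  # both give B(1,2)
```

Because $B(1,2) = -\ln[1 - H(1,2)/2]$ is an increasing function of $H(1,2)$, the two quantities rank pairs of distributions in the same order. Unlike $B(1,2)$, $\sqrt{H(1,2)}$ is the $L^2(\nu)$ distance between $\sqrt{p_1}$ and $\sqrt{p_2}$ and therefore does satisfy the triangle inequality.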

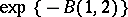

The Bhattacharyya distance has been used successfully in engineering and the statistical sciences. In control theory and in the study of the signal selection problem [a7], $B(1,2)$ has been found superior to the Kullback–Leibler distance (cf. also Kullback–Leibler-type distance measures). If one uses the Bayes criterion for classification and attaches equal costs to each type of misclassification, then it has been shown [a12] that the total probability of misclassification is majorized by $\exp\{-B(1,2)\}$. In the case of equal covariances, maximization of $B(1,2)$ yields the Fisher linear discriminant function. The Bhattacharyya distance is also used to evaluate features in two-class pattern recognition problems [a13], and it has been applied in time series discriminant analysis [a14], [a15], [a16], [a17].
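The misclassification bound can be illustrated numerically. This sketch assumes two univariate normal populations $N(\mu_i, \sigma_i^2)$, for which the standard closed form $B(1,2) = \frac{(\mu_1 - \mu_2)^2}{4(\sigma_1^2 + \sigma_2^2)} + \frac{1}{2} \ln \frac{\sigma_1^2 + \sigma_2^2}{2\sigma_1\sigma_2}$ holds. This formula is not part of the article. The code checks the closed form against midpoint-rule integration of $\sqrt{p_1 p_2}$. For equal priors and a common variance $\sigma^2$, it then compares the exact Bayes error $\Phi(-|\mu_1 - \mu_2| / (2\sigma))$ with $\exp\{-B(1,2)\}$. All function names are hypothetical.

```python
import math


def bhattacharyya_distance_normal(mu1: float, s1: float, mu2: float, s2: float) -> float:
    """Closed-form B(1,2) for N(mu1, s1^2) and N(mu2, s2^2)."""
    v1, v2 = s1 * s1, s2 * s2
    return (mu1 - mu2) ** 2 / (4.0 * (v1 + v2)) + 0.5 * math.log((v1 + v2) / (2.0 * s1 * s2))


def normal_pdf(x: float, mu: float, s: float) -> float:
    z = (x - mu) / s
    return math.exp(-0.5 * z * z) / (s * math.sqrt(2.0 * math.pi))


def coefficient_by_quadrature(mu1: float, s1: float, mu2: float, s2: float, n: int = 20000) -> float:
    """Midpoint-rule approximation of rho = integral of sqrt(f1 * f2) dx."""
    lo = min(mu1 - 12.0 * s1, mu2 - 12.0 * s2)
    hi = max(mu1 + 12.0 * s1, mu2 + 12.0 * s2)
    h = (hi - lo) / n
    total = 0.0
    for k in range(n):
        x = lo + (k + 0.5) * h
        total += math.sqrt(normal_pdf(x, mu1, s1) * normal_pdf(x, mu2, s2))
    return total * h


def bayes_error_equal_variance(mu1: float, mu2: float, s: float) -> float:
    """Bayes error for equal priors and costs and common std. deviation s,
    i.e. Phi(-|mu1 - mu2| / (2 s)), written with math.erfc."""
    return 0.5 * math.erfc(abs(mu1 - mu2) / (2.0 * s * math.sqrt(2.0)))


B = bhattacharyya_distance_normal(0.0, 1.0, 2.0, 1.0)  # equal variances: 2^2 / 8 = 0.5
print(math.exp(-B), coefficient_by_quadrature(0.0, 1.0, 2.0, 1.0))  # both about 0.607
print(bayes_error_equal_variance(0.0, 2.0, 1.0), math.exp(-B))      # about 0.159 <= 0.607
```

With equal variances the closed form reduces to $(\mu_1 - \mu_2)^2 / (8\sigma^2)$. When the priors are equal, the sharper bound $\frac{1}{2}\exp\{-B(1,2)\}$ also holds, because the Bayes error $\int \min(\frac{1}{2}p_1, \frac{1}{2}p_2)\, d\nu$ is at most $\frac{1}{2}\int \sqrt{p_1 p_2}\, d\nu$.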

See also [a18] and the references therein.
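The statement about equal covariances can be made concrete under an additional assumption: the two populations are multivariate normal, $N(\mu_1, \Sigma)$ and $N(\mu_2, \Sigma)$, with a common nonsingular covariance matrix $\Sigma$. In that case $B(1,2) = \frac{1}{8}\,\delta^\top \Sigma^{-1} \delta$, where $\delta = \mu_1 - \mu_2$. A linear function $y = w^\top x$ has the univariate distributions $N(w^\top \mu_i, w^\top \Sigma w)$. For two univariate normals with a common variance $s^2$, the distance is $(m_1 - m_2)^2 / (8 s^2)$, so

$$B_w(1,2) = \frac{(w^\top \delta)^2}{8\, w^\top \Sigma w} \le \frac{(w^\top \Sigma w)(\delta^\top \Sigma^{-1} \delta)}{8\, w^\top \Sigma w} = \frac{1}{8}\,\delta^\top \Sigma^{-1} \delta = B(1,2).$$

The inequality is the Cauchy–Schwarz inequality applied to $\Sigma^{1/2} w$ and $\Sigma^{-1/2} \delta$. Equality holds if and only if $w$ is proportional to $\Sigma^{-1}(\mu_1 - \mu_2)$, the coefficient vector of the Fisher linear discriminant function.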

References

| [a1] | B.P. Adhikari, D.D. Joshi, "Distance discrimination et résumé exhaustif" Publ. Inst. Statist. Univ. Paris , 5 (1956) pp. 57–74 |

| [a2] | C.R. Rao, "Advanced statistical methods in biometric research" , Wiley (1952) |

| [a3] | H. Chernoff, "A measure of asymptotic efficiency for tests of a hypothesis based on the sum of observations" Ann. Math. Stat. , 23 (1952) pp. 493–507 |

| [a4] | S. Kullback, "Information theory and statistics" , Wiley (1959) |

| [a5] | A.N. Kolmogorov, "On the approximation of distributions of sums of independent summands by infinitely divisible distributions" Sankhyā , 25 (1963) pp. 159–174 |

| [a6] | S.M. Ali, S.D. Silvey, "A general class of coefficients of divergence of one distribution from another" J. Roy. Statist. Soc. B , 28 (1966) pp. 131–142 |

| [a7] | T. Kailath, "The divergence and Bhattacharyya distance measures in signal selection" IEEE Trans. Comm. Techn. , COM–15 (1967) pp. 52–60 |

| [a8] | E. Hellinger, "Neue Begründung der Theorie quadratischer Formen von unendlichvielen Veränderlichen" J. Reine Angew. Math. , 136 (1909) pp. 210–271 |

| [a9] | S. Kakutani, "On equivalence of infinite product measures" Ann. of Math. , 49 (1948) pp. 214–224 |

| [a10] | K. Matusita, "A distance and related statistics in multivariate analysis" P.R. Krishnaiah (ed.) , Proc. Internat. Symp. Multivariate Analysis , Acad. Press (1966) pp. 187–200 |

| [a11] | A. Bhattacharyya, "On a measure of divergence between two statistical populations defined by probability distributions" Bull. Calcutta Math. Soc. , 35 (1943) pp. 99–109 |

| [a12] | K. Matusita, "Some properties of affinity and applications" Ann. Inst. Statist. Math. , 23 (1971) pp. 137–155 |

| [a13] | S. Ray, "On a theoretical property of the Bhattacharyya coefficient as a feature evaluation criterion" Pattern Recognition Letters , 9 (1989) pp. 315–319 |

| [a14] | G. Chaudhuri, J.D. Borwankar, P.R.K. Rao, "Bhattacharyya distance-based linear discriminant function for stationary time series" Comm. Statist. (Theory and Methods) , 20 (1991) pp. 2195–2205 |

| [a15] | G. Chaudhuri, J.D. Borwankar, P.R.K. Rao, "Bhattacharyya distance-based linear discrimination" J. Indian Statist. Assoc. , 29 (1991) pp. 47–56 |

| [a16] | G. Chaudhuri, "Linear discriminant function for complex normal time series" Statistics and Probability Lett. , 15 (1992) pp. 277–279 |

| [a17] | G. Chaudhuri, "Some results in Bhattacharyya distance-based linear discrimination and in design of signals" Ph.D. Thesis Dept. Math. Indian Inst. Technology, Kanpur, India (1989) |

| [a18] | I.J. Good, E.P. Smith, "The variance and covariance of a generalized index of similarity especially for a generalization of an index of Hellinger and Bhattacharyya" Comm. Statist. (Theory and Methods) , 14 (1985) pp. 3053–3061 |

Bhattacharyya distance. Encyclopedia of Mathematics. URL: http://encyclopediaofmath.org/index.php?title=Bhattacharyya_distance&oldid=15124